Summary

CRO data backlogs don’t form because teams ignore data; they form because data systems are designed too late. By the time an organization attempts to structure this data, inconsistency and hidden errors make clean integration difficult and expensive.

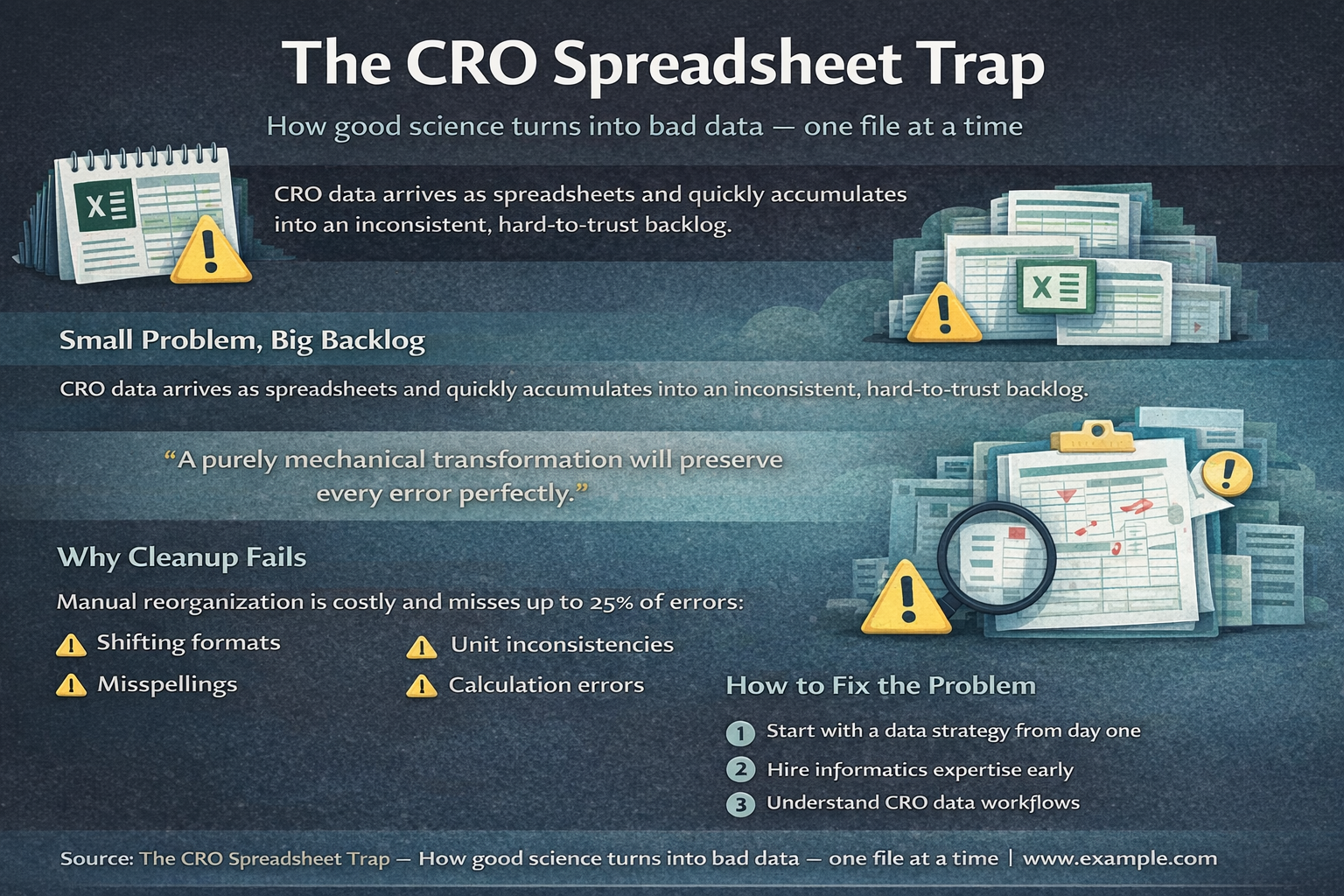

There is often a quiet assumption that CRO data will eventually “get organized.” In practice, the opposite happens. What begins as a manageable flow of spreadsheets quickly compounds into a massive backlog. I recently worked with a team that had over two years of historical data manually created in spreadsheets. Every analysis required reconciling conflicting formats and patching together a version of the truth. The root cause is fragmentation. Each CRO delivers data in its own format, and even within a single vendor, reports vary by experiment or template. While it seems like a simple matter of standardizing spreadsheets, the real issue is trust.

The Failure Mode of Spreadsheet Data

When you dig into these datasets, you consistently find:

- Layout changes that break automated parsing

- Misspelled species names that corrupt analyses

- Shifting units between reports

- Calculation errors

“A purely mechanical transformation will preserve every error perfectly.”

To solve this, you need a systematic way to normalize data across contexts and a rigorous curation process that surfaces anomalies before they reach your scientists.

The Cost of Reactive Management

I’ve seen companies attempt to “brute-force” this by paying CROs to manually structure historical reports. Sometimes at costs exceeding $100 per report, and this only to find that up to 25% of the “cleaned” data still contained errors. You end up paying twice: once to organize the data, and again to fix what was missed. This is the core failure mode of spreadsheet-based CRO data: errors are easy to introduce and hard to detect.

“Manual data cleanup does not guarantee data quality.”

A purely mechanical transformation of data, however elegant, will faithfully preserve existing errors. Effective data management requires a more comprehensive approach, which includes:

- Systematic Data Capture and Normalization: This involves understanding the meaning and relevance of data across various experiments, vendors, and contexts rather than simply reformatting it.

- Rigorous Data Curation: A process that identifies inconsistencies and flags anomalies, which is essential to provide scientists with the confidence they require to make informed decisions.

Without both components, the result is the migration of messy data into expensive data systems. This approach incurs significant unnecessary high cost, as one essentially pays twice. Once to organize the initial data, and subsequently to address what was missed in the first instance.

“By the time you’re cleaning up your CRO data, you’re already late.”

How to Break the Cycle

- Data Strategy on Day One

- Technical debt begins the moment you generate data. A robust platform is a necessity for science at scale, not a luxury.

- Informatics Expertise

- If this expertise isn’t in-house, bring it in before the backlog forms.

- Acknowledge the Gap

- CRO systems are optimized for their workflows, not yours. Bridging this gap is essential for long-term usability and traceability.

Until that gap is acknowledged, it will keep creating friction.

This Is a Timing Problem, Not Just a Data Problem

This issue is not merely a spreadsheet error, but rather it is a fundamental challenge associated with systems and timing. Most organizations only realize that they have a data problem once it has become unmanageable. A backlog is rarely created intentionally. Rather, it develops when teams delay defining how their data should function. As such, without a proactive strategy, data management processes are eventually dictated by default through the incremental use of disparate spreadsheets.

“The question is when you choose to deal with it.”